Data centers are the invisible cathedrals of our digital age. They host our data, run our applications, and increasingly, train the artificial intelligence models that are redefining our world. But this power comes at a cost, measured in terawatt-hours. The energy consumption of data centers has become a major environmental and economic issue. Faced with this challenge, human ingenuity is pushing the boundaries of physics to find ever more efficient cooling solutions, leading us from the depths of the Earth to the frontiers of space.

In this article, we will explore this critical topic in depth. Based on our analysis, thermal management is the heart of the matter. We will break down traditional methods, dive into game-changing innovations, and even look ahead to a future where our data might be processed in orbit.

Understanding a Data Center's Energy Consumption

To grasp the scale of the problem, one must first understand where all this electricity goes. A data center is much more than a simple collection of servers. It is a complex ecosystem where every component consumes energy:

- IT equipment: servers, storage systems, networking gear. This is the productive core of the data center.

- Support infrastructure: primarily cooling systems, but also uninterruptible power supplies (UPS), lighting, and security systems.

Cooling often accounts for 30% to 40% of a data center's total consumption. Why? Because nearly every watt consumed by a processor is converted into heat. Without effective heat dissipation, components would overheat and fail within minutes.

PUE: The Key Efficiency Metric

To measure a data center's energy efficiency, the industry uses a metric called PUE (Power Usage Effectiveness). Its formula is simple:

PUE = Total facility energy / IT equipment energy

A perfect PUE would be 1.0, meaning 100% of the energy is used to power the IT equipment, with no loss to cooling or anything else. In reality, the average global PUE is around 1.5, which means that for every watt delivered to the servers, roughly another half watt goes to cooling and other overhead. However, tech giants like Google and Microsoft, thanks to cutting-edge engineering, report PUEs approaching 1.1.
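To make the formula concrete, here is a minimal Python sketch. The function names and the annual energy figures are hypothetical, chosen only so that the result matches the PUE of 1.5 quoted above; they do not describe any real facility.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that does not reach the IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical annual figures for one facility (illustrative only).
it_kwh = 10_000_000
total_kwh = 15_000_000

value = pue(total_kwh, it_kwh)
print(f"PUE = {value:.2f}")
print(f"Overhead share of total energy = {overhead_share(value):.1%}")
```

Under these assumed figures, a PUE of 1.5 corresponds to about one third of the facility's energy going to something other than computing, while a PUE of 1.1 would bring that share down to roughly 9%.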

Cooling Solutions: From Tradition to Innovation

The race for a lower PUE has given rise to a multitude of cooling technologies. Let's review the most notable solutions, from the most conventional to the most disruptive.

Conventional Methods and Their Limitations

The most common method remains air cooling. The principle is to arrange server racks into hot aisles and cold aisles. Powerful air conditioners (CRACs - Computer Room Air Conditioners) blow cold air into the cold aisles, which passes through the servers to extract heat. The hot air is then drawn from the hot aisles to be cooled again. It is a proven but energy-intensive method, ill-suited for modern, ultra-dense servers that generate intense heat.
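A rough way to see why air struggles with dense racks is the sensible-heat relation: heat removed = air density × specific heat × volumetric flow × temperature rise. The sketch below rearranges it to estimate the airflow a rack needs. The air properties are standard approximate values near room temperature; the 10 kW rack and the 12 K temperature rise between cold and hot aisle are assumptions made for illustration.

```python
AIR_DENSITY_KG_M3 = 1.2        # approximate, near room temperature at sea level
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of dry air


def required_airflow_m3_s(heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry away heat_load_w with a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


# Hypothetical rack: 10 kW of IT load, 12 K rise from cold aisle to hot aisle.
rack_airflow = required_airflow_m3_s(10_000, 12.0)
print(f"Required airflow: {rack_airflow:.2f} m^3/s")
```

Because the required airflow grows in direct proportion to the heat load, a rack drawing several times more power needs several times more air pushed through the same physical space, which is where fan energy and aisle design become limiting.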

Free Cooling: When Nature Lends a Hand

Free cooling is a smarter approach. The idea is to use outside air when its temperature is low enough to cool the water in the cooling circuit or the data center air directly, without having to run the energy-hungry compressors of the air conditioners. In our experience, this is a very effective solution, but it is highly dependent on the climate. This is why many data centers are built in Nordic countries, where cool air is abundant for much of the year.
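The climate dependence can be illustrated with a small Python sketch that counts the share of hours in which outdoor air is cool enough to skip the compressors. The 18°C threshold and the hourly temperatures are made up for the example; a real site would use its own design limits and measured weather data.

```python
from typing import Iterable


def free_cooling_fraction(outdoor_temps_c: Iterable[float],
                          max_outdoor_temp_c: float) -> float:
    """Share of readings at or below the temperature that still allows free cooling."""
    temps = list(outdoor_temps_c)
    if not temps:
        raise ValueError("need at least one temperature reading")
    eligible_hours = sum(1 for t in temps if t <= max_outdoor_temp_c)
    return eligible_hours / len(temps)


# Made-up hourly outdoor temperatures (deg C) for one day; not measured data.
sample_day_c = [8, 7, 7, 6, 6, 7, 9, 12, 15, 18, 20, 22,
                23, 24, 24, 23, 21, 19, 16, 14, 12, 11, 10, 9]

THRESHOLD_C = 18.0  # hypothetical limit for this example

fraction = free_cooling_fraction(sample_day_c, THRESHOLD_C)
print(f"Free cooling possible for {fraction:.0%} of the sampled hours")
```

Running the same count over a full year of hourly data for two candidate sites gives a quick first comparison of how much compressor time each climate could save.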

Immersion Cooling: Plunging Servers into a Liquid Bath

Here, we enter the realm of disruptive technologies. Immersion cooling involves completely submerging servers in a dielectric fluid, that is, a liquid that does not conduct electricity. Depending on the product, these fluids are often marketed as non-toxic and biodegradable.

There are two main approaches:

- Single-phase immersion: The fluid circulates around the components, absorbs heat, and is then pumped to a heat exchanger to be cooled before returning to the bath. The fluid always remains in a liquid state.

- Two-phase immersion: The fluid has a very low boiling point (around 50°C / 122°F). When it comes into contact with hot components, it vaporizes. This vapor rises, contacts a cold condenser at the top of the tank, turns back into a liquid, and drips back into the bath, creating a passive and extremely efficient cooling cycle.

The advantages are spectacular: a PUE that can reportedly drop as low as 1.02, up to a 10-fold increase in computing density, and a complete absence of server fans, making server rooms eerily silent.
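The difference between the two approaches comes down to sensible versus latent heat. In single-phase immersion the fluid warms up as it flows (heat = mass flow × specific heat × temperature rise), while in two-phase immersion the heat is absorbed by boiling (heat = vaporization rate × latent heat). The sketch below compares the two; the fluid properties are placeholder values picked for illustration, not the data sheet of any particular product.

```python
def single_phase_mass_flow_kg_s(heat_w: float,
                                specific_heat_j_kg_k: float,
                                delta_t_k: float) -> float:
    """Liquid mass flow needed when heat is carried as a temperature rise."""
    if specific_heat_j_kg_k <= 0 or delta_t_k <= 0:
        raise ValueError("specific heat and temperature rise must be positive")
    return heat_w / (specific_heat_j_kg_k * delta_t_k)


def two_phase_vapor_rate_kg_s(heat_w: float,
                              latent_heat_j_kg: float) -> float:
    """Mass of fluid vaporized per second when heat is absorbed by boiling."""
    if latent_heat_j_kg <= 0:
        raise ValueError("latent heat must be positive")
    return heat_w / latent_heat_j_kg


# Hypothetical tank dissipating 10 kW.
HEAT_W = 10_000

# Placeholder fluid properties (assumptions, not vendor data).
OIL_SPECIFIC_HEAT = 2_000.0   # J/(kg K)
OIL_DELTA_T = 10.0            # K rise across the tank
FLUID_LATENT_HEAT = 100_000.0 # J/kg

pumped = single_phase_mass_flow_kg_s(HEAT_W, OIL_SPECIFIC_HEAT, OIL_DELTA_T)
boiled = two_phase_vapor_rate_kg_s(HEAT_W, FLUID_LATENT_HEAT)
print(f"Single-phase: pump about {pumped:.2f} kg/s of liquid")
print(f"Two-phase: about {boiled:.2f} kg/s of fluid boils and recondenses")
```

With these assumed numbers the single-phase loop has to pump several times more fluid than the two-phase tank vaporizes, and the two-phase cycle moves that fluid without a pump, which is the sense in which it is described as passive.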

Waste Heat Recovery: Turning a Problem into a Resource

What if heat, instead of being a waste product to be disposed of, became a resource? This is the principle of waste heat recovery. The hot water from the server cooling circuit can be fed into a district heating network to heat homes, offices, agricultural greenhouses, or even public swimming pools. This approach transforms the data center into an integrated and virtuous component of the local ecosystem, actively participating in the energy transition. It is a major area of development that influences the future of work and urban infrastructure.

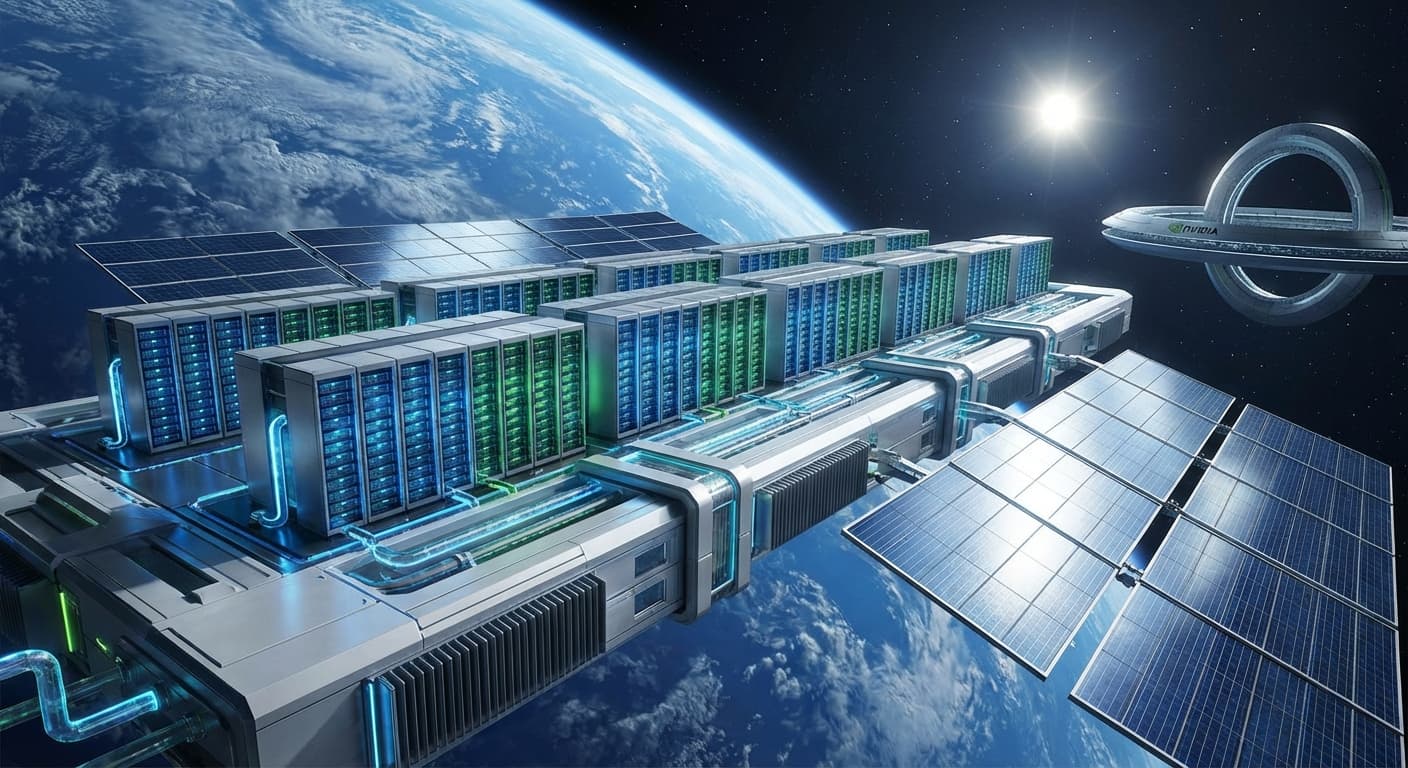

The Final Frontier: The Space-Based Data Center

While we optimize every watt on Earth, some are looking to the sky for the next great leap. The concept of an orbital data center, which was science fiction just a short time ago, is now being seriously studied. This idea, part of the great adventure of space exploration, is based on two fundamental advantages of the space environment.

- An inexhaustible energy source: Solar panels in a suitably chosen orbit can capture the sun's energy almost continuously, without interruptions from clouds and with little or no time spent in the Earth's shadow.

- A very cold heat sink: Deep space has a background temperature near absolute zero (about -270°C / -454°F), so it readily absorbs the heat that a spacecraft radiates away.

Radiative Cooling: The Key to the Orbital Data Center

On Earth, heat dissipates through conduction, convection, and radiation. In the vacuum of space, only transfer by radiation is possible. A space-based data center would therefore be equipped with large radiator panels. These panels, coated with high-emissivity materials, would radiate the servers' heat as infrared radiation directly into the cold of deep space.
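How large would such radiators be? A first-order estimate comes from the Stefan-Boltzmann law, where the net radiated power is emissivity × σ × area × (T_radiator⁴ − T_sink⁴). The sketch below solves it for area. The 1 MW load, the 300 K panel temperature, and the emissivity of 0.9 are assumptions for illustration, and the model deliberately ignores sunlight and heat from Earth falling on the panels, both of which would increase the area needed.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float,
                     radiator_temp_k: float,
                     emissivity: float,
                     sink_temp_k: float = 2.7) -> float:
    """Radiating surface area needed to reject heat_w to a cold background.

    Idealized: one uniform panel temperature, no absorbed sunlight or
    planetary infrared, and the area counted is the emitting surface.
    """
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be warmer than the sink")
    net_flux_w_m2 = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k ** 4 - sink_temp_k ** 4
    )
    return heat_w / net_flux_w_m2


# Hypothetical orbital facility rejecting 1 MW from panels at 300 K.
area = radiator_area_m2(1_000_000, 300.0, 0.9)
print(f"Radiating area needed: about {area:,.0f} m^2")
```

Because radiated power scales with the fourth power of temperature, running the panels hotter shrinks the required area quickly, which is one reason the temperature at which the electronics can reject heat matters so much in this design.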

It is a solution of remarkable elegance: the radiators themselves need no fans and consume no water. Heat must still be carried from the servers to the panels, typically through heat pipes or a fluid loop, but the final rejection step relies only on fundamental physics. This cutting-edge space technology could enable the creation of computing centers of enormous power, fueled by clean energy and cooled largely passively.

Of course, the challenges are immense: launch costs, robotic maintenance, protection against radiation and micrometeorites, and communication latency with Earth. However, for non-urgent computing tasks like training complex AI models or scientific simulations, the space-based data center represents a fascinating and potentially sustainable path forward.

Sources and References

To ensure the rigor of this article, we have relied on recognized sources and public data from leading players.

- International Energy Agency (IEA): For global data on data center energy consumption and future projections. Their reports are a benchmark in the industry.

- Google Data Centers (efficiency.google): Google publicly shares its efficiency data, including the PUE of its campuses, offering valuable transparency into industry best practices.

- The Uptime Institute: A leading organization that provides standards, certifications, and research on the design and operation of data centers.

- ADEME (The French Agency for Ecological Transition): The French agency provides analyses and recommendations on the energy efficiency of digital infrastructure within France.